Last Updated on 22/04/2026 by Eran Feit

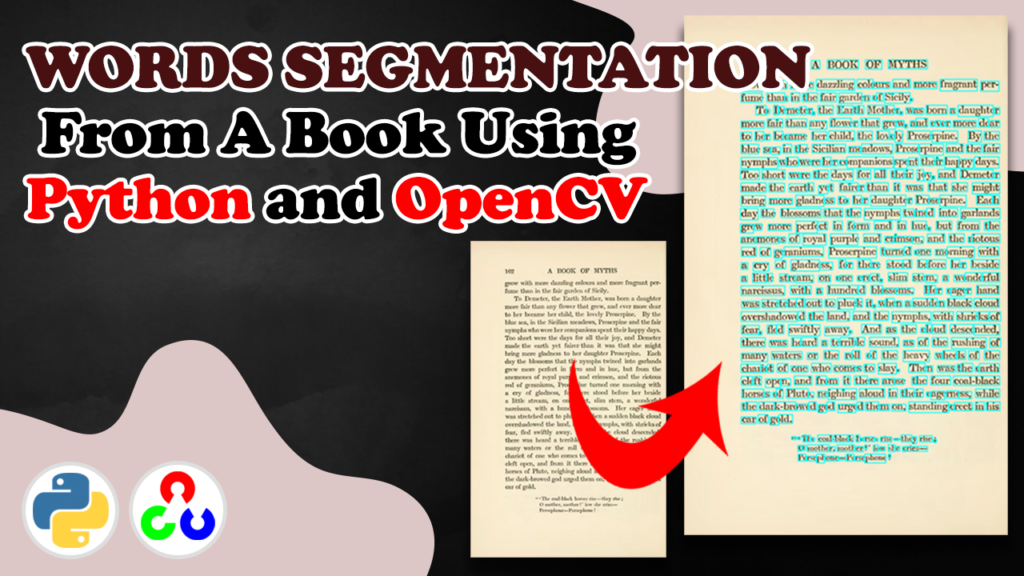

Introduction: Bringing Old Pages to Digital Life

Optical Character Recognition (OCR) is a technology that extracts text from images. It is used in applications such as document digitization, license plate recognition, and automated data entry.

If you’ve ever wanted to digitize a physical book or scanned document, you know that the hardest part is getting clean, readable text from noisy images. This tutorial shows how to extract text from scanned pages using Python and OpenCV, focusing on how to preprocess, segment, and isolate each word before sending it to an OCR engine like Tesseract.

This post walks through a real working example that detects lines and words from a scanned book image.

By the end, you’ll understand how image preprocessing can dramatically improve OCR accuracy, how to segment words line-by-line, and how to visualize and save your segmented output using OpenCV.

The result is an OCR preprocessing stage that works with Tesseract or any other OCR engine.

Check out our video here: https://youtu.be/c61w6H8pdzs&list=UULFTiWJJhaH6BviSWKLJUM9sg

You can find the code here: https://ko-fi.com/s/d621f2eb2c

    ### Import OpenCV for image processing and NumPy for array operations.
    import cv2
    import numpy as np

    ### Read the input document image from disk.
    ### Provide a proper path to a scanned page or book photo.
    img = cv2.imread('Open-CV/Words-Segmentation/book2.jpg')

    ### Define a function that prepares a binary mask where text is white on black.
    ### Steps: convert to grayscale, invert-binary threshold to flip text to white, smooth with Gaussian blur, and re-threshold to clean edges.
    def thresholding(image):
        ### Convert the BGR image to grayscale to simplify intensity operations.
        img_gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)

        ### Apply inverse binary threshold so darker text becomes white.
        ### Values at or below 170 turn to 255 (white), others to 0 (black).
        ret, thresh = cv2.threshold(img_gray, 170, 255, cv2.THRESH_BINARY_INV)

        ### Smooth the mask to merge small gaps between characters for stronger word blobs.
        thresh = cv2.GaussianBlur(thresh, (11, 11), 0)

        ### Re-threshold the blurred mask to get a crisp binary image again.
        ret, thresh = cv2.threshold(thresh, 130, 255, cv2.THRESH_BINARY)

        ### Return the clean binary mask where words are white.
        return thresh

    ### Create the thresholded mask of the input image for downstream segmentation.
    thresh_img = thresholding(img)

    ### Visualize the binary mask to verify the text is white and background is black.
    cv2.imshow("thresh_img", thresh_img)

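Explanation: This part converts the image into a binary mask, a critical preprocessing step. The image is converted to grayscale, inverse-thresholded at 170 so dark text becomes white, blurred with an 11×11 Gaussian kernel, and re-thresholded at 130 so nearby characters form crisp, solid blobs.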

Summary: After this step, your scanned page becomes a clean black-and-white canvas where each word stands out as a bright region.
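To make the thresholding rule concrete, here is a minimal NumPy sketch of what the inverse binary threshold computes. The helper name `inverse_binary_threshold` and the tiny sample array are illustrative assumptions, not part of the original script; in the real pipeline `cv2.threshold` does this work.

```python
import numpy as np

def inverse_binary_threshold(gray, thresh=170, max_value=255):
    """Illustrative restatement of cv2.THRESH_BINARY_INV.

    Pixels brighter than `thresh` become 0 (background),
    all other pixels become `max_value` (text).
    """
    return np.where(gray > thresh, 0, max_value).astype(np.uint8)

# A made-up 2x3 patch: bright paper (240+) with a few darker ink pixels.
page = np.array([[250, 240, 30],
                 [245, 170, 171]], dtype=np.uint8)

mask = inverse_binary_threshold(page)
print(mask)
```

Note that a pixel exactly at the threshold (170 here) counts as text, while 171 counts as background. If your scans are darker or lighter than the sample page, the value 170 is the first thing to tune.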

Detecting Text Lines with Morphological Operations

Now that we have a binary image, we’ll detect text lines. We apply horizontal dilation to merge characters into long line shapes, then find contours to extract their bounding boxes.

    ### Prepare containers and a rectangular kernel to detect whole text lines.
    linesArray = []
    kernelRows = np.ones((5, 40), np.uint8)

    ### Dilate horizontally to connect characters and words into long line components.
    dilated = cv2.dilate(thresh_img, kernelRows, iterations=1)
    cv2.imshow("dilated", dilated)

    ### Extract external contours which should correspond to line blobs after dilation.
    (contoursRows, hierarchy) = cv2.findContours(dilated.copy(), cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)

    ### Iterate over detected contours, filter by area, and store bounding boxes for candidate lines.
    for row in contoursRows:
        area = cv2.contourArea(row)
        if area > 500:
            x, y, w, h = cv2.boundingRect(row)
            linesArray.append([x, y, w, h])

    ### Print how many line candidates were found as a quick sanity check.
    print(len(linesArray))

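Explanation: Here we use morphological dilation to emphasize horizontal structures in the image. The 5×40 kernel is much wider than it is tall, so characters and words on the same line fuse into one long blob while separate lines typically stay apart. Contours with an area of 500 pixels or less are discarded as noise.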

Summary: After this step, each text line is isolated, preparing for per-line word segmentation.

Segmenting Words within Each Line

With line regions ready, we’ll now segment individual words.

    ### Sort lines from top to bottom using the y coordinate.
    sortedLinesArray = sorted(linesArray, key=lambda line: line[1])

    ### Prepare structures for word extraction and indexing.
    words = []
    lineNumber = 0
    all = []

    ### Create a square kernel to connect characters into words without bridging adjacent lines.
    kernelWords = np.ones((7, 7), np.uint8)

    ### Dilate the thresholded image with the word kernel to merge letters into word-sized blobs.
    dilateWordsImg = cv2.dilate(thresh_img, kernelWords, iterations=1)
    cv2.imshow("dilate Words Img", dilateWordsImg)

    ### For each detected line, focus on its region and find word contours inside it.
    for line in sortedLinesArray:
        x, y, w, h = line
        roi_line = dilateWordsImg[y:y + h, x:x + w]
        (contoursWords, hierarchy) = cv2.findContours(roi_line.copy(), cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)

        for word in contoursWords:
            x1, y1, w1, h1 = cv2.boundingRect(word)
            cv2.rectangle(img, (x + x1, y + y1), (x + x1 + w1, y + y1 + h1), (255, 255, 0), 2)
            words.append([x + x1, y + y1, x + x1 + w1, y + y1 + h1])

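Explanation: Each line region is analyzed to locate words. A 7×7 dilation merges letters into word-sized blobs, and each word's bounding box is shifted by the line's (x, y) offset so that it is stored in full-page coordinates as [left, top, right, bottom].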

Summary: You now have a word-by-word breakdown of your scanned text, ready for OCR or further processing.
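The `words` list holds page coordinates only, so the line each word came from is lost once the loop ends. One possible way to recover reading order is sketched below. The function name `reading_order` and the sample boxes are hypothetical, and assigning each word to the line with the nearest vertical center is an assumption of this sketch, not the original script's method.

```python
def reading_order(line_boxes, word_boxes):
    """Return (line_index, word_box) pairs sorted top-to-bottom, then left-to-right.

    line_boxes: [x, y, w, h] per text line (as stored in linesArray).
    word_boxes: [x1, y1, x2, y2] per word (as stored in words).
    """
    lines = sorted(line_boxes, key=lambda box: box[1])
    if not lines:
        return []
    line_centers = [y + h / 2 for (_, y, _, h) in lines]

    tagged = []
    for word in word_boxes:
        word_center = (word[1] + word[3]) / 2
        # Assumption: a word belongs to the line whose vertical center is closest.
        line_index = min(range(len(lines)),
                         key=lambda i: abs(word_center - line_centers[i]))
        tagged.append((line_index, word))

    # Sort by line first, then by the left edge of the word box.
    return sorted(tagged, key=lambda pair: (pair[0], pair[1][0]))

# Made-up boxes: two lines given out of order, two words on each.
sample_lines = [[10, 100, 300, 30], [10, 20, 300, 30]]
sample_words = [[200, 105, 260, 125], [20, 25, 80, 45],
                [20, 104, 90, 126], [150, 24, 210, 46]]

ordered = reading_order(sample_lines, sample_words)
for line_index, box in ordered:
    print(line_index, box)
```

Sorting on the pair (line index, left edge) keeps words from different lines from interleaving, which is what happens when all boxes on the page are sorted by x alone.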

Visualizing and Saving the Segmented Output

Finally, let’s inspect, extract, and save the results.

    ### Sort all accumulated words by the left x coordinate.
    sortedWords = sorted(words, key=lambda line: line[0])

    ### Build a list of (line_index, word_bbox) pairs.
    ### Note: lineNumber is never incremented in this script, so every pair carries index 0.
    for word in sortedWords:
        a = (lineNumber, word)
        all.append(a)

    ### Select an example word box and show it.
    chooseWord = all[3][1]
    print(chooseWord)
    roiWord = img[chooseWord[1]:chooseWord[3], chooseWord[0]:chooseWord[2]]
    cv2.imshow("Show a word", roiWord)

    ### Show and save final image with all rectangles.
    cv2.imshow("Show the words", img)
    cv2.imwrite("c:/temp/segmentedBook.png", img)

    ### Wait for a key press and close all GUI windows.
    cv2.waitKey(0)
    cv2.destroyAllWindows()

Explanation: The segmented image is displayed and saved for inspection or OCR. You can then use pytesseract to perform actual text recognition.

Summary: The full text layout is now digitized — each word isolated and ready for recognition.
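As a rough sketch of that recognition step, the snippet below crops each word box and hands it to an OCR function. The helpers `crop_word` and `recognize_words`, the `pad` margin, and the injectable `ocr` parameter are assumptions of this example. When no `ocr` function is passed, it falls back to `pytesseract.image_to_string` with `--psm 8` (Tesseract's single-word mode), which requires both the pytesseract package and the Tesseract binary to be installed.

```python
import numpy as np

def crop_word(image, box, pad=2):
    """Crop one [x1, y1, x2, y2] box with a small margin, clamped to the image."""
    height, width = image.shape[:2]
    x1, y1, x2, y2 = box
    return image[max(y1 - pad, 0):min(y2 + pad, height),
                 max(x1 - pad, 0):min(x2 + pad, width)]

def recognize_words(image, boxes, ocr=None):
    """Run an OCR callable on every word crop and return the results in order."""
    if ocr is None:
        # Imported lazily so the cropping logic can be tried without Tesseract.
        import pytesseract

        def ocr(crop):
            return pytesseract.image_to_string(crop, config="--psm 8").strip()

    return [ocr(crop_word(image, box)) for box in boxes]

# Dry run on a blank synthetic page with a stand-in "recognizer"
# that only reports the crop size instead of reading text.
page = np.full((50, 120, 3), 255, dtype=np.uint8)
boxes = [[10, 10, 40, 30], [0, 0, 20, 15]]
shapes = recognize_words(page, boxes, ocr=lambda crop: crop.shape[:2])
print(shapes)
```

One detail worth keeping in mind: the script draws the rectangles directly onto `img`, so crops taken from `img` afterwards include parts of those outlines. Cropping from a copy made before drawing (for example `clean = img.copy()`) is likely to give the OCR engine cleaner input.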

FAQ

What is thresholding in OpenCV? Thresholding converts a grayscale image into a binary one, highlighting text regions for easier detection.

Why use Gaussian blur before segmentation? It smooths small gaps between characters so letters merge into words more reliably.

What does dilation do? Dilation connects nearby white pixels, merging letters into complete words or lines.

Can this method improve OCR accuracy? Yes, preprocessing helps OCR engines detect text more clearly and reduce misreads.

Is this method suitable for handwritten text? It works partly, but deep learning methods like CRNN perform better on handwriting.

What library can perform OCR after segmentation? Use pytesseract or EasyOCR to convert segmented word images into text.

Why sort contours by Y or X axis? Sorting ensures that extracted words follow correct reading order.

How to deal with uneven lighting? Apply adaptive thresholding or CLAHE to normalize brightness before segmentation.

Can I use this for multi-column documents? Yes, detect each column separately before applying line segmentation.

Where are the output files saved? The final segmented image is saved to “c:/temp/segmentedBook.png”.

Conclusion

We’ve just walked through a practical pipeline for extracting text from scanned books with Python and OpenCV.

Whether you’re digitizing archives, processing receipts, or building custom OCR systems, these preprocessing steps form the foundation of reliable text extraction.

Connect : ☕ Buy me a coffee — https://ko-fi.com/eranfeit

🖥️ Email : feitgemel@gmail.com

🌐 https://eranfeit.net

🤝 Fiverr : https://www.fiverr.com/s/mB3Pbb

Enjoy,

Eran