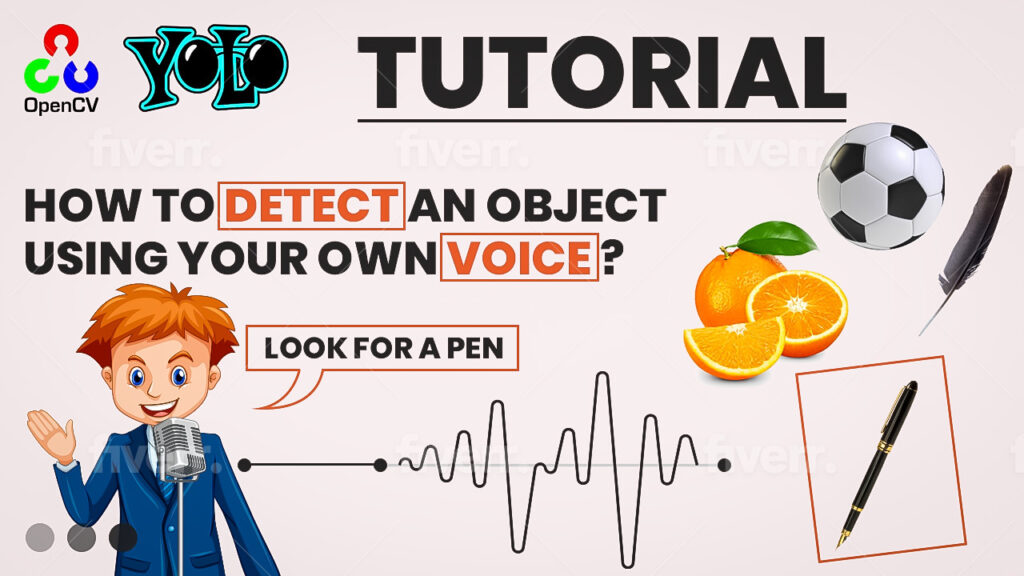

You imported all libraries, loaded YOLOv4-tiny into OpenCV’s DNN, read class labels, opened the webcam, and defined UI and audio parameters.

This prepares the pipeline for fast OpenCV YOLO object detection, with voice control added in the next steps.

Here you build an interactive button overlay.

When the user left-clicks inside the button area, the app records a short audio clip, saves it as a WAV file, and re-encodes it as 16-bit PCM, a format the SpeechRecognition library reads reliably.

The recognized text becomes the filter term for highlighting detections.

    ### Define a mouse callback that records audio upon clicking inside the button area.
    def recordAudioByMouseClick(event, x, y, flags, params):
        ### Declare that we will modify the global flags inside this function.
        global ButtonFlag
        global LookForThisClassName

        ### If the left mouse button was pressed, check whether it is inside the button region.
        if event == cv2.EVENT_LBUTTONDOWN:
            ### Verify the click lies within the button’s bounding box.
            if x1 <= x <= x2 and y1 <= y <= y2:
                ### Provide console feedback for debugging.
                print("Click inside the button")

                ### Record a stereo audio snippet for the configured duration at the given sampling rate.
                myrecording = sd.rec(int(secods * fs), samplerate=fs, channels=2)

                ### Block until recording is complete so we can safely save the file.
                sd.wait()  # wait until the recording is finished

                ### Write the recorded audio to a WAV file for later processing.
                write(audioFileName, fs, myrecording)  # save the audio file

                ### Run speech-to-text on the newly recorded audio and store the transcribed text.
                LookForThisClassName = getTextFromAudio()

                ### Turn on the filter flag so matches will be highlighted during detection.
                if ButtonFlag is False:
                    ButtonFlag = True

            ### If the click is outside the button, disable the filtering behavior for clarity.
            else:
                print("Click outside the button")
                ButtonFlag = False
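The click test inside the callback is a plain point-in-rectangle check. As a minimal sketch, it can be pulled into a small helper and tried without a camera or microphone. The helper name `is_inside_button` and the corner values in `BUTTON` are hypothetical, chosen only for this illustration; they are not part of the tutorial's code.

```python
def is_inside_button(x, y, x1, y1, x2, y2):
    """Return True when point (x, y) lies inside or on the edge of the rectangle."""
    return x1 <= x <= x2 and y1 <= y <= y2


# Hypothetical button corners (x1, y1, x2, y2), used only for this example.
BUTTON = (20, 20, 560, 90)

print(is_inside_button(40, 60, *BUTTON))    # a click on the button
print(is_inside_button(600, 300, *BUTTON))  # a click elsewhere in the frame
```

Keeping the geometry in a separate function makes it easy to check the button bounds before wiring them into `cv2.setMouseCallback`.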

This part converts audio to text, registers the mouse handler, and runs the main detection loop.

Each frame is fed to YOLOv4-tiny.

When a detection’s class name appears in your spoken text, the code highlights that object with a bounding box and label.
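To make the matching step concrete, here is a small sketch of comparing a transcription with class labels. It is a variation rather than the tutorial's exact approach: instead of a plain substring search it matches whole words, case-insensitively, so that "carpet" does not trigger "car". The function name `spoken_labels` and the sample labels are assumptions made for this example.

```python
import re


def spoken_labels(transcript, class_names):
    """Return the class names that occur as whole words or phrases in the transcript."""
    text = transcript.lower()
    matches = []
    for name in class_names:
        # \b anchors the label at word boundaries; re.escape guards special characters.
        pattern = r"\b" + re.escape(name.lower()) + r"\b"
        if re.search(pattern, text):
            matches.append(name)
    return matches


# Sample labels for illustration only.
labels = ["person", "car", "cell phone", "cat"]

print(spoken_labels("Person and a cell phone", labels))  # ['person', 'cell phone']
print(spoken_labels("a red carpet", labels))             # []

# With plain substring search, a match at the very start has index 0
# and a missing label has index -1.
print("person".find("person"))  # 0
print("person".find("car"))     # -1
```

The last two lines show why a substring check with `str.find` should compare against `-1` (or use `>= 0`): a label spoken as the first word sits at index 0.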

A filled button labeled “Click for record - 3 seconds” is drawn onto every frame so the user knows where to click.

    ### Convert recorded audio into a 16-bit PCM WAV and transcribe it using Google’s recognizer.
    def getTextFromAudio():
        ### Read the recorded audio file with soundfile to inspect data and sample rate.
        data, samplerate = soundfile.read(audioFileName)

        ### Re-encode the audio as 16-bit PCM which SpeechRecognition expects for best compatibility.
        soundfile.write('c:/temp/outputNew.wav', data, samplerate, subtype='PCM_16')

        ### Create a recognizer instance to handle speech-to-text inference.
        recognizer = sr.Recognizer()

        ### Wrap the converted WAV in an AudioFile so the recognizer can read it.
        jackhammer = sr.AudioFile('c:/temp/outputNew.wav')

        ### Open the audio source context and load the entire clip into memory.
        with jackhammer as source:
            audio = recognizer.record(source)

        ### Use the default Google Web Speech API backend to recognize spoken words.
        result = recognizer.recognize_google(audio)

        ### Print the transcription for visibility and debugging.
        print(result)

        ### Return the recognized text to the caller so the UI can use it as a filter.
        return result

    ### Create a named window that will serve as the display target for frames and UI overlays.
    cv2.namedWindow("Frame")  # must match the name used in imshow and setMouseCallback

    ### Attach the mouse callback so clicks over the window trigger recording logic.
    cv2.setMouseCallback("Frame", recordAudioByMouseClick)

    ### Start the main application loop to process frames until the user exits.
    while True:
        ### Read a frame from the capture device; rtn indicates success.
        rtn, frame = cap.read()

        ### Stop if no frame could be read (camera unplugged or stream ended).
        if not rtn:
            break

        ### Run object detection on the current frame to obtain class IDs, confidence scores, and boxes.
        (class_ids, scores, bboxes) = model.detect(frame)

        ### You can inspect raw results during development if needed.
        # print("Class ids:", class_ids)
        # print("Scores :", scores)
        # print("Bboxes :", bboxes)

        ### Iterate over parallel lists of detections to draw and label regions of interest.
        for class_id, score, bbox in zip(class_ids, scores, bboxes):
            ### Unpack the bounding box as x, y for top-left and width, height for size.
            x, y, width, height = bbox  # x, y is the upper-left corner

            ### Retrieve the human-readable class label for the detected object.
            name = classesNames[class_id]

            ### Check if the spoken text contains this class label as a substring.
            ### find() returns -1 when the label is absent and 0 when it starts the text.
            index = LookForThisClassName.find(name)  # look for the label inside the string

            ### If filtering is enabled and the label appears in the transcription, highlight the box.
            if ButtonFlag is True and index >= 0:
                ### Draw a rectangle around the matched detection with a custom color and thickness.
                cv2.rectangle(frame, (x, y), (x + width, y + height), (130, 50, 50), 3)

                ### Put the class name just above the box for readability.
                cv2.putText(frame, name, (x, y - 10), cv2.FONT_HERSHEY_COMPLEX, 1, (120, 50, 50), 2)

        ### Draw a filled UI button prompting the user to click and record a 3-second snippet.
        cv2.rectangle(frame, (x1, y1), (x2, y2), (153, 0, 0), -1)  # -1 draws a filled rectangle

        ### Render readable button text to guide the user interaction flow.
        cv2.putText(frame, "Click for record - 3 seconds", (40, 60), cv2.FONT_HERSHEY_COMPLEX, 1, (255, 255, 255), 2)  # white color

        ### Show the annotated frame in the display window named “Frame”.
        cv2.imshow("Frame", frame)

        ### Allow the user to quit the loop by pressing 'q' on the keyboard.
        if cv2.waitKey(1) == ord('q'):
            break

    ### Release the camera resource once the loop ends to free the device.
    cap.release()

    ### Destroy any OpenCV windows that were created during execution.
    cv2.destroyAllWindows()
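The tutorial relies on `soundfile` with `subtype='PCM_16'` for the re-encoding step. To show what "16-bit PCM" means in practice, the sketch below does the same kind of conversion with only the Python standard library: each float sample in the range -1.0 to 1.0 is scaled to a signed 16-bit integer and written with the `wave` module. The function name `write_pcm16_wav`, the test tone, and the output file name are assumptions for this example, not part of the tutorial's code.

```python
import math
import os
import struct
import tempfile
import wave


def write_pcm16_wav(path, samples, sample_rate):
    """Write mono float samples in [-1.0, 1.0] as a 16-bit PCM WAV file."""
    frames = bytearray()
    for s in samples:
        clipped = max(-1.0, min(1.0, s))           # guard against overshoot
        frames += struct.pack("<h", int(round(clipped * 32767)))  # little-endian int16
    with wave.open(path, "wb") as wav:
        wav.setnchannels(1)      # mono
        wav.setsampwidth(2)      # 2 bytes per sample = 16 bits
        wav.setframerate(sample_rate)
        wav.writeframes(bytes(frames))


# 0.1 seconds of a 440 Hz tone at a hypothetical 8 kHz sample rate.
rate = 8000
tone = [0.5 * math.sin(2 * math.pi * 440 * n / rate) for n in range(rate // 10)]
path = os.path.join(tempfile.gettempdir(), "tone_pcm16.wav")
write_pcm16_wav(path, tone, rate)

# Read the header back to confirm the sample width, rate, and frame count.
with wave.open(path, "rb") as wav:
    print(wav.getsampwidth(), wav.getframerate(), wav.getnframes())  # 2 8000 800
```

In the application itself, `soundfile.write(..., subtype='PCM_16')` handles multi-channel data and the scaling for you, so this sketch is only meant to clarify the format.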