Last Updated on 08/05/2026 by Eran Feit

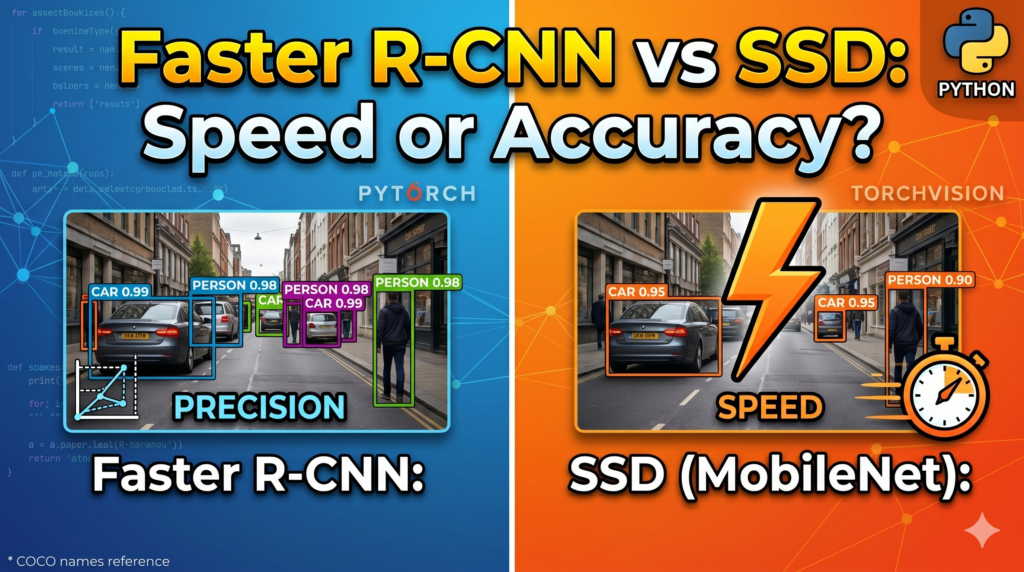

In the world of computer vision, choosing the right architecture is often a game of trade-offs between precision and performance. This guide provides a hands-on exploration of two foundational architectures used in modern AI development. We dive deep into the practical implementation of Faster R-CNN vs SSD PyTorch, contrasting the heavy-duty accuracy of two-stage detectors against the streamlined efficiency of single-shot models.

Navigating these models can be challenging when moving from theoretical research to real-world deployment. Understanding the nuanced differences between a high-precision backbone like ResNet50 and a lightweight alternative like MobileNet is essential for any developer looking to optimize a computer vision pipeline. This comparison clarifies when to prioritize a meticulous bounding box over millisecond-level inference speeds, ensuring your project meets its specific operational goals.

To bridge the gap between theory and execution, we walk through a complete technical setup using a PyTorch 2.5.1 environment. By providing the exact installation commands and Python scripts required to run both models, this tutorial removes the guesswork often associated with environment configuration and dependency management. You will see exactly how to load pre-trained weights and process images through each architecture side-by-side to observe the differences in output.

By the end of this tutorial, you will have a clear, practical perspective on which model fits your specific project needs. We move beyond simple definitions to show you the actual visualizations for both detectors, so you can confidently implement a Faster R-CNN vs SSD PyTorch solution that balances your requirements for detection quality and processing time.

Finding the Right Fit: Why the Faster R-CNN vs SSD PyTorch Debate Still Matters

When building a vision-based application, it becomes clear very quickly that no single solution fits every scenario. This is where the Faster R-CNN vs SSD PyTorch comparison becomes a critical part of the architectural decision. Faster R-CNN belongs to the family of two-stage detectors: a Region Proposal Network first proposes regions of interest, and a second stage classifies each region and refines its bounding box. This approach is a common choice for projects where missing a small object or producing an imprecise bounding box is not an option, such as medical imaging or high-fidelity document analysis.
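Bounding-box precision is usually quantified with Intersection over Union (IoU): the overlap area of a predicted box and a reference box divided by the area of their union. The sketch below is a plain-Python illustration, not code from either model, and it assumes boxes in the (x1, y1, x2, y2) pixel format that torchvision detectors return; `box_iou` is a hypothetical helper name.

```python
def box_iou(a, b):
    """Intersection over Union of two boxes given as (x1, y1, x2, y2)."""
    # Corners of the overlapping rectangle (may be empty)
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


# A prediction shifted by 10 px against a 100x100 reference box
reference = (0, 0, 100, 100)
prediction = (10, 0, 110, 100)
print(f"IoU = {box_iou(reference, prediction):.3f}")  # prints: IoU = 0.818
```

COCO's headline mAP averages results over IoU thresholds from 0.5 to 0.95, so a few pixels of drift can noticeably lower the score, especially on small objects.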

On the other side of the spectrum, Single Shot MultiBox Detectors (SSD) are engineered for environments where speed is the primary constraint. Instead of the multi-step process used by Faster R-CNN, an SSD performs localization and classification in a single pass over the image. This makes it a popular choice for mobile applications, robotics, and edge devices where hardware resources are restricted. If the goal is to identify objects on a live camera feed at high frame rates, the lightweight SSDLite variant with a MobileNet backbone is often the preferred tool.
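The single pass works because SSD does not search for regions first: it attaches a fixed set of default (anchor) boxes to every cell of several feature maps and predicts class scores and box offsets for all of them at once. Below is a minimal sketch for one square feature map. The `default_boxes` helper is hypothetical and the scale and aspect-ratio values are chosen purely for illustration; torchvision's SSDLite builds its boxes internally with its own settings.

```python
import math


def default_boxes(fmap_size, scale, aspect_ratios):
    """Generate SSD-style default boxes (cx, cy, w, h), normalized to [0, 1].

    fmap_size: width/height of a square feature map, in cells
    scale: box size relative to the image (illustrative value)
    aspect_ratios: width/height ratios placed at every cell
    """
    boxes = []
    for i in range(fmap_size):          # row index
        for j in range(fmap_size):      # column index
            # Each default box is centered on its feature-map cell
            cx = (j + 0.5) / fmap_size
            cy = (i + 0.5) / fmap_size
            for ar in aspect_ratios:
                w = scale * math.sqrt(ar)
                h = scale / math.sqrt(ar)
                boxes.append((cx, cy, w, h))
    return boxes


# A 10x10 feature map with 3 aspect ratios already yields 300 candidate boxes
boxes = default_boxes(fmap_size=10, scale=0.2, aspect_ratios=[1.0, 2.0, 0.5])
print(len(boxes))  # 300
```

Every one of these boxes is scored for every class in the same forward pass, which is why SSD then relies on score thresholds and non-maximum suppression to prune its output.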

For developers and AI practitioners, the objective is to find the “sweet spot” where a model is accurate enough for the task but fast enough to remain functional for the user. Modern frameworks like PyTorch have made it simpler to swap these architectures, but understanding the underlying logic of each helps you anticipate how they will behave under different workloads. By comparing a ResNet50-based Faster R-CNN with a MobileNet-based SSD, we examine two opposite ends of the performance spectrum and get a practical look at how modern object detection works.

Setting Up Your Vision Pipeline: Accuracy Meets Efficiency

To implement a professional-grade object detection system, we begin with a structured Python environment. The goal of this implementation is a versatile framework that lets you toggle between high-precision detection and high-speed execution with the two models in this Faster R-CNN vs SSD PyTorch comparison. With PyTorch 2.5.1 and CUDA 12.4 installed, the intensive tensor computations required for deep learning can run on an NVIDIA GPU.

The target of this code is to demonstrate how to load, transform, and run inference on images using two distinct neural network architectures. We start by initializing the Faster R-CNN model with a ResNet50 backbone, which serves as our benchmark for maximum accuracy. This model is designed to meticulously scan images for objects, making it ideal for tasks where every detail matters. The code walks through the essential preprocessing steps, such as converting images into tensors and adding batch dimensions, which are standard requirements for feeding data into modern computer vision models.

In addition to the high-precision route, the script incorporates an SSDLite model paired with a MobileNet v3 backbone. This represents the “speed” side of our comparison, specifically optimized for environments with limited computational overhead. The code illustrates how to switch between these models seamlessly, allowing you to observe how the same input image is processed differently in terms of bounding box placement and confidence scores. This high-level contrast is vital for understanding the operational limits of your deployment environment.
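One way to make the two detectors interchangeable is a small registry keyed by name, so the rest of the script never changes. The helper below is a hypothetical sketch: `DETECTORS` and `build_detector` are our names, not part of torchvision. It only uses the torchvision builder functions and weight enums that appear in this tutorial, and it imports torchvision lazily so the lookup logic can be tested without it.

```python
# Hypothetical registry: short name -> (builder function, weights enum), both
# looked up in torchvision.models.detection (names as used in this tutorial)
DETECTORS = {
    "faster_rcnn": ("fasterrcnn_resnet50_fpn", "FasterRCNN_ResNet50_FPN_Weights"),
    "ssdlite": ("ssdlite320_mobilenet_v3_large", "SSDLite320_MobileNet_V3_Large_Weights"),
}


def build_detector(name):
    """Return (model in eval mode, weights) for one of the registered detectors."""
    if name not in DETECTORS:
        raise ValueError(f"Unknown detector {name!r}; choose from {sorted(DETECTORS)}")
    # Import lazily so this module can be loaded without torchvision installed
    import torchvision.models.detection as detection

    builder_name, weights_name = DETECTORS[name]
    weights = getattr(detection, weights_name).DEFAULT
    model = getattr(detection, builder_name)(weights=weights, progress=True)
    model.eval()
    return model, weights
```

With this in place, switching architectures is a one-word change, for example `model, weights = build_detector("ssdlite")`, while the preprocessing and plotting code stays the same.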

Finally, the tutorial focuses on the visualization of results, which is where the data truly becomes meaningful. By integrating the COCO category names, the script maps numerical labels back to human-readable strings, such as “dog” or “person.” We then employ Matplotlib to overlay bounding boxes and confidence percentages directly onto the original image. This final step is not just for display; it provides a visual audit of the model’s performance, helping you decide which architecture—Faster R-CNN or SSD—is the right choice for your specific real-world application.


Accuracy vs. Speed: Comparing Faster R-CNN and SSDLite in PyTorch

Building a professional computer vision application requires a deep understanding of the performance trade-offs inherent in different neural architectures.

Whether you are working on medical imaging or real-time robotics, the tools provided here can form the backbone of your next project.

Setting Up Your Environment for Faster R-CNN vs SSD PyTorch

Before we can start detecting objects, we need to make sure our development environment is configured for deep learning tasks.

Creating a dedicated Conda environment allows us to isolate our project dependencies, preventing conflicts between different versions of libraries like PyTorch and OpenCV.

This step is the foundation of a stable and reproducible Faster R-CNN vs SSD PyTorch computer vision pipeline.

In this tutorial, we use PyTorch 2.5.1 with CUDA 12.4 so the models can take advantage of NVIDIA GPU acceleration.

By pinning specific versions of torchvision, torchaudio, and supporting packages like opencv-python and matplotlib, we keep the detection scripts reproducible and avoid version conflicts.

The same configuration runs both the accurate Faster R-CNN and the fast SSDLite, so neither model needs its own environment.

Once the environment is active and the libraries are installed, your machine will be ready to handle complex tensor operations.

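We also install pycocotools, a toolkit for reading COCO-format annotations and computing COCO evaluation metrics. The inference scripts in this tutorial do not import it, but it becomes useful once you evaluate or fine-tune these COCO-trained models.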

With this setup complete, you are now equipped to transition from a clean slate to a high-performance Faster R-CNN vs SSD PyTorch workstation.

Why is environment configuration the first step for Faster R-CNN vs SSD PyTorch? Setting up a specific Conda environment with PyTorch 2.5.1 and CUDA 12.4 ensures that all library dependencies are compatible and that the code can access GPU acceleration, which is necessary for running the Faster R-CNN vs SSD PyTorch models efficiently.

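Is it necessary to use a specific version of CUDA for this tutorial? Not exactly. PyTorch 2.5.1 is published with builds for CUDA 11.8, 12.1, and 12.4. This tutorial uses 12.4, but any of these builds gives the code GPU acceleration as long as your NVIDIA driver supports it (change pytorch-cuda=12.4 in the install command to match). Because the conda packages bundle their own CUDA runtime, your driver version matters more than a system-wide CUDA toolkit.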

```shell
### Create a new Conda environment named Pytorch251 using Python 3.12
conda create --name Pytorch251 python=3.12

### Activate the newly created environment to start installing packages
conda activate Pytorch251

### Check the NVIDIA driver and the highest CUDA version it supports
nvidia-smi

### (Optional) Check the system CUDA compiler; the conda PyTorch build ships its own CUDA runtime
nvcc --version

### Install the specific versions of PyTorch, Torchvision, and Torchaudio compatible with CUDA 12.4
conda install pytorch==2.5.1 torchvision==0.20.1 torchaudio==2.5.1 pytorch-cuda=12.4 -c pytorch -c nvidia

### Install OpenCV for image processing and handling camera feeds
pip install opencv-python==4.11.0.86

### Install Matplotlib for creating visualizations and plotting bounding boxes
pip install matplotlib==3.10.0

### Install PyCOCOtools to work with the Common Objects in Context dataset format
pip install pycocotools==2.0.8
```

This part handles the critical preparation of your system so the detection models can run without errors.
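Before running the detectors, it can help to confirm that the pinned packages are installed and that PyTorch actually sees a GPU. Here is a minimal sketch using the standard library's `importlib.metadata`. The `EXPECTED` versions mirror the install commands above; `check_environment` and `cuda_summary` are hypothetical helper names.

```python
from importlib import metadata

# Versions pinned in this tutorial's install commands
EXPECTED = {
    "torch": "2.5.1",
    "torchvision": "0.20.1",
    "opencv-python": "4.11.0.86",
    "matplotlib": "3.10.0",
    "pycocotools": "2.0.8",
}


def check_environment(expected=EXPECTED):
    """Return {package: (expected, installed or None)} for every mismatch."""
    problems = {}
    for package, wanted in expected.items():
        try:
            installed = metadata.version(package)
        except metadata.PackageNotFoundError:
            installed = None
        # Local build tags such as "+cu124" are ignored in the comparison
        if installed is None or installed.split("+")[0] != wanted:
            problems[package] = (wanted, installed)
    return problems


def cuda_summary():
    """Report whether PyTorch can use CUDA (returns None if torch is missing)."""
    try:
        import torch
    except ImportError:
        return None
    return {
        "cuda_available": torch.cuda.is_available(),
        "built_with_cuda": torch.version.cuda,  # e.g. "12.4"; None for CPU-only builds
    }


print(check_environment())  # {} when every pinned version matches
print(cuda_summary())
```

If `cuda_available` is False while `built_with_cuda` is set, a common cause is an NVIDIA driver that is too old for that CUDA build.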

Loading the Precision Model: Faster R-CNN vs SSD PyTorch Comparison

Now that our environment is ready, we can initialize our first heavyweight model: Faster R-CNN with a ResNet50 backbone.

This model is a “two-stage” detector, meaning it first proposes regions where objects might be and then classifies them.

This design makes it exceptionally accurate, though it requires more computational power than the single-shot alternatives found in our Faster R-CNN vs SSD PyTorch comparison.

In the code below, we load the default pre-trained weights, which have been trained on the massive COCO dataset.

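Setting the model to eval() mode is a crucial step. It turns off Dropout and makes normalization layers use their stored running statistics instead of per-batch statistics. For torchvision detection models, it also changes what forward() returns: in training mode the model expects ground-truth targets and returns a dictionary of losses, while in evaluation mode it returns predicted boxes, labels, and scores.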

This ensures that our predictions remain consistent and accurate every time we process an image in this Faster R-CNN vs SSD PyTorch study.
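To see why the training/evaluation switch matters, here is a plain-Python toy that mimics the documented behavior of PyTorch's nn.Dropout (inverted dropout). During training, each value is zeroed with probability p and the survivors are scaled by 1/(1 - p). In evaluation mode, the input passes through unchanged. The `ToyDropout` class is illustrative only and is not PyTorch code.

```python
import random


class ToyDropout:
    """Plain-Python imitation of inverted dropout with a train/eval switch."""

    def __init__(self, p=0.5, seed=0):
        self.p = p
        self.training = True            # mirrors nn.Module.training
        self.rng = random.Random(seed)  # seeded so runs are repeatable

    def train(self):
        self.training = True
        return self

    def eval(self):
        self.training = False
        return self

    def __call__(self, values):
        if not self.training:
            return list(values)         # identity at inference time
        keep_scale = 1.0 / (1.0 - self.p)
        return [0.0 if self.rng.random() < self.p else v * keep_scale for v in values]


layer = ToyDropout(p=0.5)
x = [1.0, 2.0, 3.0, 4.0]
print(layer(x))         # random: each value is either 0.0 or doubled
print(layer.eval()(x))  # [1.0, 2.0, 3.0, 4.0] on every call
```

In PyTorch, model.eval() sets training = False on every submodule recursively, which is what switches layers like this one into their inference behavior.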

We also use the Python Imaging Library (PIL) to open our test image, which serves as the raw input for our vision pipeline.

At this stage, the model is waiting in memory, fully loaded with the mathematical intelligence required to recognize eighty different object categories.

This is where the magic of deep learning begins to take shape as we prepare to compare Faster R-CNN vs SSD PyTorch.

Why do we need to call eval() before running the Faster R-CNN vs SSD PyTorch models? Calling eval() switches the model into evaluation mode. Dropout is disabled, normalization layers use their stored running statistics rather than batch statistics, and torchvision's detectors return predictions instead of training losses. All of this is needed for reliable results in our Faster R-CNN vs SSD PyTorch analysis.

Test image:

[Image: train]

```python
### Import the Faster R-CNN model and its corresponding pre-trained weights from Torchvision
from torchvision.models.detection import fasterrcnn_resnet50_fpn, FasterRCNN_ResNet50_FPN_Weights

### Import the Image module from PIL to handle loading and opening image files
from PIL import Image

### Load the default pre-trained weights for the Faster R-CNN ResNet50 model
weights = FasterRCNN_ResNet50_FPN_Weights.DEFAULT

### Initialize the model with the loaded weights and enable a progress bar for the download
object_detection_model = fasterrcnn_resnet50_fpn(weights=weights, progress=True)

### Set the model to evaluation mode so it returns predictions instead of training losses
object_detection_model.eval()

### Open the target image file located in your local project directory
### (convert to RGB so grayscale or RGBA files still produce 3 channels)
test_img = Image.open("Best-Object-Detection-models/Faster-RCNN/Object Detection Using Faster-RCNN/train.jpg").convert("RGB")

### Use the default OS viewer to display the image for a quick visual check
test_img.show()
```

Loading the pre-trained weights lets you reuse a model already trained on COCO, so you can detect objects immediately without training from scratch.

Data Preprocessing for Faster R-CNN vs SSD PyTorch Tasks

Raw images are essentially matrices of colors, but deep learning models require a very specific numerical format. We use torchvision.transforms to convert our PIL image into a PyTorch tensor. ToTensor scales pixel values from 0 to 255 down to the range [0, 1] and reorders the dimensions from (H, W, C) to (C, H, W). This is the input format both models in our Faster R-CNN vs SSD PyTorch pipeline expect, and each model resizes and normalizes the tensor internally.

A common pitfall for beginners is forgetting that the model works on a batch of images, even if you are only processing one. Torchvision's detection models are documented to take a list of (C, H, W) tensors. A 4D tensor with a leading batch dimension also works, because the model iterates over its first dimension. By using unsqueeze(dim=0), we add that batch dimension, and the same input works for both architectures in our Faster R-CNN vs SSD PyTorch tutorial.

In this section, we also print the shape of our tensors to verify that the transformation was successful. Once the tensor is ready, we pass it through the object_detection_model to receive a list of predicted bounding boxes, labels, and confidence scores. This process reveals the raw results of our Faster R-CNN vs SSD PyTorch inference before we apply any visualization filters.

What does the unsqueeze operation actually do to the Faster R-CNN vs SSD PyTorch input? The unsqueeze operation adds a singleton dimension at index zero, transforming a 3D image tensor into a 4D batch tensor of shape (1, 3, H, W) to meet the input requirements of the Faster R-CNN vs SSD PyTorch models.

```python
### Import the transformation tools to convert raw images into numerical tensors
import torch
import torchvision.transforms as transforms

### Define a composition of transforms that converts a PIL image into a PyTorch tensor
transform = transforms.Compose([
    transforms.ToTensor(),
])

### Apply the defined transformation to our loaded test image
test_img_tensor = transform(test_img)

### Print the resulting tensor and its shape to verify the numerical conversion
print(test_img_tensor)
print(test_img_tensor.shape)

### Add a batch dimension at index zero to prepare the single image for model input
test_img_tensor = test_img_tensor.unsqueeze(dim=0)

### Print the new shape to confirm the addition of the batch dimension
print("Add batch dimension:")
print(test_img_tensor.shape)

### Pass the image tensor through the model to generate object detection predictions
### (no_grad skips gradient tracking, which inference does not need)
with torch.no_grad():
    preds = object_detection_model(test_img_tensor)

### Display the raw list of prediction dictionaries (boxes, labels, and scores)
print("Prediction results:")
print(preds)
```

This preprocessing step is vital for ensuring your data matches the input requirements of the PyTorch detection models.
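The environment installs CUDA-enabled PyTorch, yet the scripts in this tutorial never move the model or the image tensor to the GPU, so inference runs on the CPU. Here is a minimal sketch of device-aware inference, assuming a torchvision detector and a batched tensor like test_img_tensor. `pick_device` and `detect` are hypothetical helper names, not torchvision APIs.

```python
import torch


def pick_device():
    """Use the first CUDA GPU if PyTorch can see one, otherwise the CPU."""
    return torch.device("cuda" if torch.cuda.is_available() else "cpu")


def detect(model, batch, device=None):
    """Run a detector on `device` and return its predictions as CPU tensors."""
    if device is None:
        device = pick_device()
    # nn.Module.to() moves parameters in place and returns the same module
    model = model.to(device).eval()
    # Tensor.to() returns a copy, so the caller's CPU tensor is left untouched
    batch = batch.to(device)
    # Inference needs no gradients, which saves memory and time
    with torch.no_grad():
        preds = model(batch)
    # Matplotlib and NumPy need CPU tensors, so bring every output field back
    return [{key: value.cpu() for key, value in p.items()} for p in preds]
```

Calling `preds = detect(object_detection_model, test_img_tensor)` would then feed the plotting code unchanged, because the returned boxes, labels, and scores already live on the CPU.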

Visualizing Accuracy in the Faster R-CNN vs SSD PyTorch Framework

Once the model generates its predictions, we need a way to interpret the results and see them visually.

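We load the coco.names file, which maps the numeric labels predicted by the model to category names. Note that torchvision's COCO detectors predict the original COCO category IDs rather than indices 0 to 79: index 0 is the background and unused IDs are filled with “N/A” placeholders, so the list must contain 91 entries in torchvision's order. A standard 80-line coco.names file would shift most labels. The weights object also provides the correct list as weights.meta["categories"].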

This allows us to label our Faster R-CNN vs SSD PyTorch outputs with human-readable names like “car” or “person.”
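Here is a hedged sketch of a loader that prefers the category list shipped with the weights (`weights.meta["categories"]`) and rejects a names file that cannot be indexed directly by torchvision's COCO label IDs. `load_category_names` is a hypothetical helper, and the check assumes one name per line.

```python
def load_category_names(path=None, weights=None):
    """Return a label-ID -> name list usable with torchvision's COCO detectors.

    Prefers the list bundled with the weights; otherwise reads a text file
    (one name per line) and checks it has the 91 slots the label IDs index into.
    """
    if weights is not None:
        # torchvision weight enums expose their class names here
        return list(weights.meta["categories"])
    with open(path, "r") as f:
        names = f.read().splitlines()
    if len(names) != 91:
        raise ValueError(
            f"{path} has {len(names)} names; torchvision COCO label IDs need 91 "
            "(background plus 'N/A' placeholders), so an 80-name file shifts the labels"
        )
    return names
```

With the weights object from the loading step, `COCO_INSTANCE_CATEGORY_NAMES = load_category_names(weights=weights)` gives a list that lines up with the predicted labels of both detectors in this tutorial.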

To visualize the detection, we use Matplotlib to draw the image and overlay the predicted bounding boxes.

However, the model returns many candidate detections, most of them with low confidence, so we apply a CONFIDENCE_THRESHOLD and only draw detections scoring above 0.5 in each Faster R-CNN vs SSD PyTorch run.
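The filtering step can be pulled out of the plotting loop so the same logic serves both models. The sketch below assumes one prediction dict in the format torchvision detectors return. `filter_detections` is a hypothetical name, and the 0.5 default simply mirrors CONFIDENCE_THRESHOLD.

```python
def filter_detections(pred, names, threshold=0.5):
    """Keep detections scoring above `threshold` as (name, score, box) tuples.

    `pred` is one entry of the list a torchvision detector returns: a dict with
    'boxes', 'labels' and 'scores'. Plain lists work too, which keeps it testable.
    """
    kept = []
    for box, label, score in zip(pred["boxes"], pred["labels"], pred["scores"]):
        score = float(score)  # works for 0-dim tensors and plain floats
        if score > threshold:
            # Convert tensor values to plain Python numbers for printing or plotting
            kept.append((names[int(label)], score, [float(v) for v in box]))
    return kept
```

The plotting loop can then iterate over `filter_detections(preds[0], COCO_INSTANCE_CATEGORY_NAMES)` and unpack `(name, score, (x1, y1, x2, y2))` directly.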

The final visualization script handles the conversion of tensors back into a format that Matplotlib can display.

We remove the batch dimension, detach the tensor from the autograd graph, convert it to a NumPy array, and rearrange the dimensions from (C, H, W) back to (H, W, C).

The resulting plot shows how each detector in our Faster R-CNN vs SSD PyTorch comparison performs on a real-world image.

How do we filter uncertain results in the Faster R-CNN vs SSD PyTorch visualizer? We iterate through the scores returned by the models and only draw bounding boxes for detections where the confidence exceeds a threshold of 0.5, effectively filtering noise from our Faster R-CNN vs SSD PyTorch output.

```python
### Open the text file containing the COCO dataset category names
### (it must list 91 names in torchvision's label-ID order, index 0 = background)
with open("Best-Object-Detection-models/Faster-RCNN/Object Detection Using Faster-RCNN/coco.names", "r") as f:
    COCO_INSTANCE_CATEGORY_NAMES = f.read().splitlines()

### Print the list of category names to ensure they were loaded correctly
print(COCO_INSTANCE_CATEGORY_NAMES)

### Import Matplotlib's plotting and patch tools for visual rendering
import matplotlib.pyplot as plt
import matplotlib.patches as patches

### Convert the image tensor back to a NumPy array and remove the batch dimension
img = test_img_tensor.squeeze(0).detach().cpu().numpy()

### Transpose the image dimensions from CxHxW to HxWxC for correct Matplotlib display
img = img.transpose((1, 2, 0))

### Initialize a figure and axes for the final detection plot
fig, ax = plt.subplots(1, figsize=(12, 9))

### Display the processed image on the Matplotlib axes
ax.imshow(img)

### Set a confidence threshold to only visualize highly certain detections
CONFIDENCE_THRESHOLD = 0.5

### Loop through the predicted boxes, labels, and scores to draw the results
for box, label, score in zip(preds[0]['boxes'], preds[0]['labels'], preds[0]['scores']):
    if score > CONFIDENCE_THRESHOLD:
        ### Extract the coordinates for the bounding box corners
        x1, y1, x2, y2 = box.detach().cpu().numpy()
        ### Map the numeric label to its corresponding COCO class name
        label_name = COCO_INSTANCE_CATEGORY_NAMES[label.item()]
        ### Create a red rectangle patch based on the box coordinates
        rect = patches.Rectangle((x1, y1), x2 - x1, y2 - y1, linewidth=1, edgecolor='r', facecolor='none')
        ### Add the bounding box rectangle to the plot axes
        ax.add_patch(rect)
        ### Place the label and confidence score text above the detected object
        ax.text(x1, y1, f'{label_name} {score.item():.2f}', color='white', fontsize=8, bbox=dict(facecolor='red', alpha=0.5))

### Remove the axis markers for a cleaner visual output
plt.axis('off')

### Display the final annotated image to the screen
plt.show()
```

Visualizing the Faster R-CNN results highlights its strength in precision and accurate box placement.

Here is the result (Faster R-CNN output):

[Image: faster rcnn output]

Lightning Fast Detections with SSDLite and MobileNet

To see the other side of the performance coin, we now implement the SSDLite model with a MobileNet v3 backbone. While Faster R-CNN is a heavyweight precision tool, SSDLite is designed for speed and efficiency. It processes the entire image in a single pass, which significantly reduces the time it takes to get a result and makes it a strong fit for mobile devices or live video streams.

The implementation follows a nearly identical pattern to our first model, which highlights the flexibility of the PyTorch ecosystem. We load the pre-trained weights for ssdlite320_mobilenet_v3_large and set it to evaluation mode. Because torchvision's detection models resize and normalize their inputs internally, we can pass our existing image tensor directly into this new model without any extra transformation steps.

When you run this section, you should notice how quickly the preds are generated. While the bounding boxes might be slightly less precise than those from Faster R-CNN, the trade-off is often worth it for real-time applications. This comparison lets you see firsthand how architectural choices affect the final user experience in a computer vision app.

What makes SSDLite faster than Faster R-CNN in a production environment? SSDLite is a single-stage detector that predicts bounding boxes and class probabilities in one go. This avoids the region proposal step and the per-region second stage used by Faster R-CNN. It also processes a small 320×320 input with a lightweight MobileNet v3 backbone, and together these choices give it much lower latency.
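Part of SSDLite's speed comes from input size. Assume torchvision's defaults: fasterrcnn_resnet50_fpn resizes so the shorter side is 800 pixels, capping the longer side at 1333, while ssdlite320_mobilenet_v3_large resizes every image to 320×320. Under those assumptions, a quick calculation shows how many more pixels the two-stage model has to process. Pixel count is only a rough proxy for compute, and both helper names are hypothetical.

```python
def rcnn_input_size(height, width, min_size=800, max_size=1333):
    """Approximate input size after torchvision's Faster R-CNN resize rule.

    The shorter side is scaled to min_size unless that would push the longer
    side past max_size (assumed defaults of fasterrcnn_resnet50_fpn).
    """
    scale = min(min_size / min(height, width), max_size / max(height, width))
    return height * scale, width * scale


def pixel_ratio(height, width, ssd_side=320):
    """How many more pixels Faster R-CNN processes than SSDLite's 320x320 input."""
    h, w = rcnn_input_size(height, width)
    return (h * w) / (ssd_side * ssd_side)


# A 640x480 photo
print(tuple(round(v) for v in rcnn_input_size(480, 640)))  # (800, 1067)
print(round(pixel_ratio(480, 640), 2))                     # 8.33
```

So for a typical 640×480 photo, Faster R-CNN works on roughly eight times as many pixels before its heavier backbone and second stage are even taken into account.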

```python
### Import the lightweight SSDLite model and its pre-trained weights
import torch
from torchvision.models.detection import ssdlite320_mobilenet_v3_large, SSDLite320_MobileNet_V3_Large_Weights

### Load the default pre-trained weights for the SSDLite MobileNet model
weights = SSDLite320_MobileNet_V3_Large_Weights.DEFAULT

### Initialize the SSDLite model with weights and enabled progress tracking
object_detection_model = ssdlite320_mobilenet_v3_large(weights=weights, progress=True)

### Set the SSD model to evaluation mode for inference
object_detection_model.eval()

### Generate detection predictions using the existing image tensor
with torch.no_grad():
    preds = object_detection_model(test_img_tensor)

### Print the prediction dictionary for the SSD model results
print(preds)

### Convert the tensor back to NumPy for visualization of the second model
img = test_img_tensor.squeeze(0).detach().cpu().numpy()
img = img.transpose((1, 2, 0))

### Create a new figure to display the SSD detection results
fig, ax = plt.subplots(1, figsize=(12, 9))
ax.imshow(img)

### Use the same threshold to ensure a fair comparison between the two models
CONFIDENCE_THRESHOLD = 0.5

### Overlay the SSD bounding boxes and labels onto the test image
for box, label, score in zip(preds[0]['boxes'], preds[0]['labels'], preds[0]['scores']):
    if score > CONFIDENCE_THRESHOLD:
        x1, y1, x2, y2 = box.detach().cpu().numpy()
        label_name = COCO_INSTANCE_CATEGORY_NAMES[label.item()]
        rect = patches.Rectangle((x1, y1), x2 - x1, y2 - y1, linewidth=1, edgecolor='r', facecolor='none')
        ax.add_patch(rect)
        ax.text(x1, y1, f'{label_name} {score.item():.2f}', color='white', fontsize=8, bbox=dict(facecolor='red', alpha=0.5))

### Finalize the plot and show the high-speed detection results
plt.axis('off')
plt.show()
```

Implementing SSDLite gives you the speed required for modern, responsive vision applications on the edge.
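The speed difference is easy to claim and easy to measure, but the scripts above do not time anything. Below is a minimal, hypothetical timing helper built only on the standard library. Run it for each model on the same image and device to compare them under your own conditions. The `sync` parameter is our assumption for GPU use: CUDA kernels launch asynchronously, so passing `torch.cuda.synchronize` keeps the clock honest.

```python
import statistics
import time


def benchmark(fn, warmup=3, runs=20, sync=None):
    """Return the median wall-clock time of fn() in milliseconds.

    warmup: untimed calls first (early calls include one-off setup costs)
    sync: optional callable run before reading the clock, e.g. torch.cuda.synchronize
    """
    for _ in range(warmup):
        fn()
    timings = []
    for _ in range(runs):
        if sync is not None:
            sync()
        start = time.perf_counter()
        fn()
        if sync is not None:
            sync()
        timings.append((time.perf_counter() - start) * 1000.0)
    return statistics.median(timings)


# Stand-in workload; for a detector, call something like
# benchmark(lambda: object_detection_model(test_img_tensor)) inside torch.no_grad()
ms = benchmark(lambda: sum(range(10_000)))
print(f"median: {ms:.3f} ms")
```

The median is used instead of the mean so that an occasional slow run, such as a garbage-collection pause, does not skew the comparison.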

Here is the result (SSD output):

[Image: ssd output]

Summary of the Comparison

In this tutorial, we successfully established a modern PyTorch environment and implemented two of the most popular object detection architectures. We saw how Faster R-CNN excels in providing high-precision bounding boxes at the cost of higher latency. Conversely, we demonstrated how SSDLite offers a rapid alternative for real-time needs by simplifying the detection process. Understanding this balance is the key to building successful AI products that meet both technical and user requirements.

FAQ

What is the main difference between Faster R-CNN and SSD? Faster R-CNN is a two-stage detector that prioritizes high accuracy, while SSD is a single-stage detector optimized for maximum inference speed.

Which model is better for real-time video detection? SSDLite is significantly better for real-time tasks because its single-shot architecture allows it to process frames much faster than Faster R-CNN.

Why is a GPU recommended for these PyTorch models? GPUs use parallel processing to handle the massive tensor computations required by deep learning, resulting in much faster detection compared to a standard CPU.

What does the eval() function do in the code? It sets the model to evaluation mode. This disables training-specific features like Dropout, makes normalization layers use their stored statistics, and makes torchvision's detectors return predictions rather than training losses.

How do I detect custom objects not in the COCO dataset? You must perform fine-tuning, which involves training the pre-trained model on your own labeled dataset containing the new object categories.

What is a “two-stage” detector? A two-stage detector like Faster R-CNN first identifies potential regions of interest and then classifies those regions in a separate second step for higher precision.

Is SSDLite compatible with mobile devices? Yes, SSDLite is specifically designed for mobile and edge deployment because it uses lightweight backbones like MobileNet v3 to minimize computational overhead.

What is the purpose of the confidence threshold? It filters out low-probability detections, ensuring that only objects the model is highly certain about are displayed on the final image.

Can I use PyTorch 2.5.1 with older CUDA versions? Yes, within limits. PyTorch 2.5.1 builds also exist for CUDA 11.8 and 12.1; install one by changing pytorch-cuda=12.4 in the conda command, as long as your NVIDIA driver supports it. This tutorial was set up with CUDA 12.4.

What are pre-trained weights? Pre-trained weights are the “knowledge” of a model that has already been trained on a large dataset like COCO, allowing you to detect objects immediately without training from scratch.

Conclusion

Choosing between Faster R-CNN and SSD is an important decision in many computer vision projects. As we have demonstrated, there is no “perfect” model, only the right tool for the specific job at hand. If your users require clinical precision where every bounding box must be exact, Faster R-CNN is the stronger of the two. However, for the growing world of edge computing and real-time monitoring, SSDLite provides the agility needed to stay responsive. By mastering both, you empower yourself to build smarter, faster, and more reliable computer vision systems.

Connect : ☕ Buy me a coffee — https://ko-fi.com/eranfeit

🖥️ Email : feitgemel@gmail.com

🌐 https://eranfeit.net

🤝 Fiverr : https://www.fiverr.com/s/mB3Pbb

Enjoy,

Eran